Show your users exactly which words need work.

Pronunciation scoring API

Send audio with a reference sentence and get back per-word accuracy, timing, and structured signals your product can use.

One API call. Under 30ms. No vendor lock-in.

Choose your evaluation path

Most teams only need one of three paths: try the product, check partner fit, or inspect the API surface.

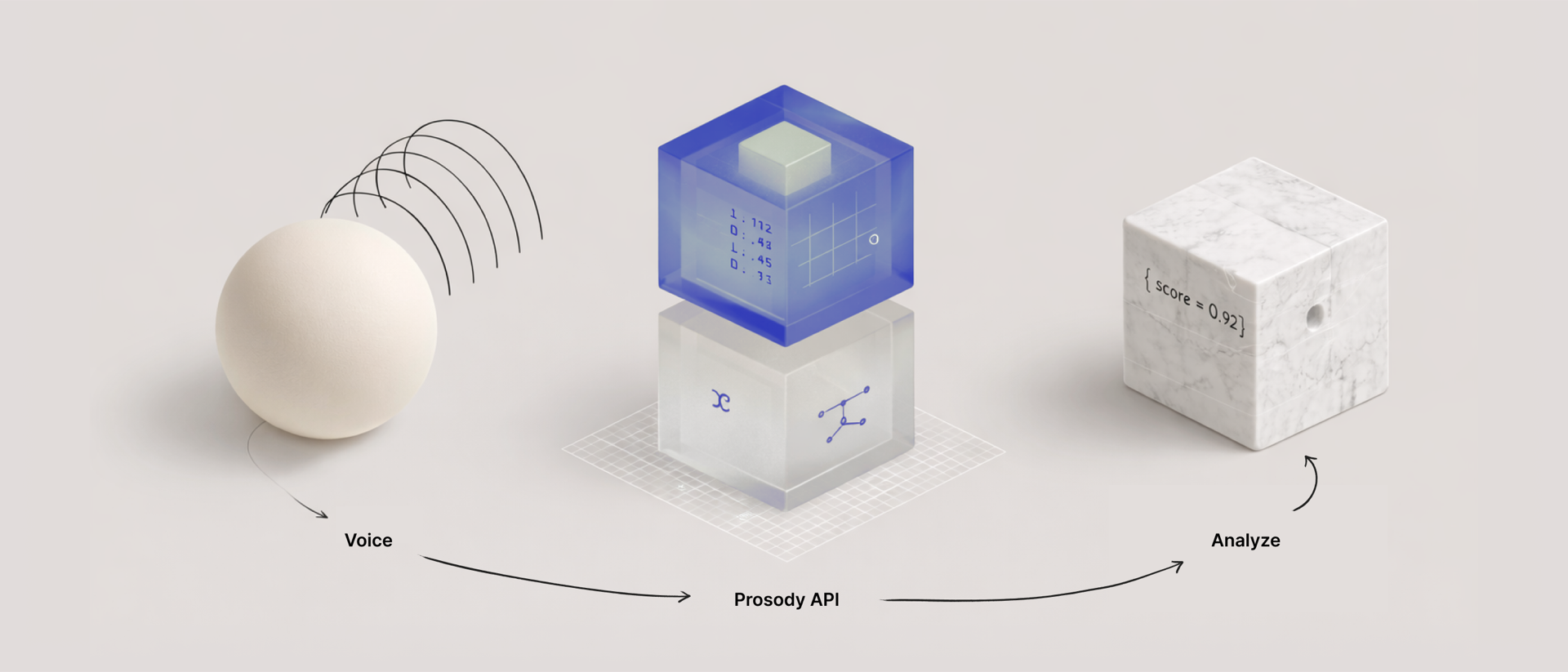

How it works

Audio + reference text

GPU phoneme alignment with word timing and mismatch awareness.

Structured scoring layer

Per-word scores, phoneme detail, timing, and script adherence.

Product-ready feedback

Coaching UI, assessment rules, QA review, or human-in-the-loop workflows.

```shell
# Score a recording with curl
# tr strips the line wraps that GNU base64 inserts, which would break the JSON string
curl -X POST https://api.prosody.studio/v1/scores \
  -H "X-API-Key: $PROSODY_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "audio_data": "'"$(base64 < recording.wav | tr -d '\n')"'",
    "sample_rate": 16000,
    "language": "en-US",
    "reference_text": "The quick brown fox jumps over the lazy dog"
  }'
```

```typescript
// TypeScript SDK
import { readFileSync } from "node:fs";
import { ProsodyClient } from "@prosody/sdk";

const client = new ProsodyClient({ apiKey: process.env.PROSODY_API_KEY });

// Send the recording as base64 together with the sentence the speaker was asked to read
const result = await client.score({
  audio: readFileSync("recording.wav").toString("base64"),
  language: "en-US",
  referenceText: "The quick brown fox jumps over the lazy dog"
});
```

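```python
# Score a recording with Python
import base64
import os

import requests

# Read the key from the environment, as the curl example does
PROSODY_API_KEY = os.environ["PROSODY_API_KEY"]

with open("recording.wav", "rb") as f:
    audio = base64.b64encode(f.read()).decode()

resp = requests.post(
    "https://api.prosody.studio/v1/scores",
    headers={"X-API-Key": PROSODY_API_KEY},
    json={
        "audio_data": audio,
        "sample_rate": 16000,
        "language": "en-US",
        "reference_text": "The quick brown fox jumps over the lazy dog",
    },
)
resp.raise_for_status()  # surface HTTP errors before parsing the body
result = resp.json()
```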
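The request examples above hard-code `sample_rate` as 16000. As a minimal sketch, assuming the API wants the whole WAV file base64-encoded (as those examples do) and that `sample_rate` should match the file, the rate can be read from the WAV header instead. `build_score_payload` is a hypothetical helper name, not part of any Prosody SDK.

```python
import base64
import wave


def build_score_payload(wav_path, reference_text, language="en-US"):
    """Build a JSON-serializable body for POST /v1/scores from a WAV file.

    Hypothetical helper: the field names come from the request examples
    above; reading sample_rate from the header is an assumption about
    what the API expects.
    """
    # Read the sample rate from the WAV header rather than hard-coding it.
    with wave.open(wav_path, "rb") as wav:
        sample_rate = wav.getframerate()

    # Encode the whole file, header included, as the examples above do.
    with open(wav_path, "rb") as f:
        audio_b64 = base64.b64encode(f.read()).decode("ascii")

    return {
        "audio_data": audio_b64,
        "sample_rate": sample_rate,
        "language": language,
        "reference_text": reference_text,
    }
```

The returned dictionary can be passed as the `json=` argument of the `requests.post` call shown above.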

```json
{
  "scores": {
    "pronunciation": 72.4,
    "script_adherence": 100.0,
    "overall": 72.4
  },
  "words": [
    {
      "word": "the",
      "status": "match",
      "acoustic_match": 68.1,
      "timing": { "start": 0.12, "end": 0.24, "duration_ms": 120 },
      "phonemes": [
        { "detected": "DH", "acoustic_match": 71.2, "timing": { "start": 0.12, "end": 0.18 } },
        { "detected": "AH", "acoustic_match": 65.0, "timing": { "start": 0.18, "end": 0.24 } }
      ]
    }
  ]
}
```
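To show users which words need work, a client can walk the `words` array of the response. The sketch below assumes the response shape in the sample above. The 70.0 cutoff, the rule that any status other than `"match"` is flagged, and the `words_needing_work` name are all illustrative assumptions, not Prosody recommendations.

```python
def words_needing_work(result, threshold=70.0):
    """Return flagged words with their weakest phoneme, lowest score first.

    Assumptions (not from Prosody docs): the 70.0 cutoff is arbitrary, and
    any status other than "match" is treated as needing work.
    """
    flagged = []
    for word in result.get("words", []):
        score = word.get("acoustic_match", 0.0)
        if word.get("status") == "match" and score >= threshold:
            continue
        phonemes = word.get("phonemes", [])
        # The lowest-scoring phoneme is a natural target for coaching.
        weakest = min(phonemes, key=lambda p: p["acoustic_match"], default=None)
        flagged.append({
            "word": word["word"],
            "acoustic_match": score,
            "start": word.get("timing", {}).get("start"),
            "weakest_phoneme": weakest["detected"] if weakest else None,
        })
    return sorted(flagged, key=lambda w: w["acoustic_match"])


# Sample response from above, trimmed to the fields used here.
sample = {
    "words": [{
        "word": "the", "status": "match", "acoustic_match": 68.1,
        "timing": {"start": 0.12, "end": 0.24, "duration_ms": 120},
        "phonemes": [
            {"detected": "DH", "acoustic_match": 71.2},
            {"detected": "AH", "acoustic_match": 65.0},
        ],
    }]
}
print(words_needing_work(sample))
```

With the sample data, "the" is flagged because 68.1 falls below the illustrative cutoff, and AH is reported as its weakest phoneme.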

Built and validated

Scoring latency is measured per word (165 ns to 822 ns). Word recognition is validated on L2-ARCTIC (275/275 words). Public evaluation is batch-first and English-first today. Prosody pricing is $0.004/min (see pricing details).

Best fit

Prosody is strongest when your product already knows what the speaker was supposed to say.

Best for

- Scripted read-aloud and oral assessment

- Guided pronunciation coaching

- QA flows on known prompts or transcripts

Not for

- Generic speech-to-text replacement

- Open-ended conversation grading

- Broad voice-agent stacks without reference text

Running a real partner evaluation? Start with the partner guide for fit, integration path, and a suggested checklist.

Why Prosody

| Capability | Prosody | Azure Speech | Pronunciation API vendors |
|---|---|---|---|
| Primary use case | Guided speech workflows | Broad speech suite | Assessment products with API surfaces |
| Integration posture | REST-first + SDK | SDK-first workflow | Varies by vendor |
| Output granularity | Word + phoneme + timing | Word + phoneme | Usually word-level plus summaries |
| Control | Vendor-neutral architecture | Azure-locked | Closed cloud |
| Pricing clarity | $0.004/min published | ~$0.022/min list price (comparison) | Mixed public and quote-led pricing |
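The pricing row can be turned into a rough monthly estimate. The sketch below uses only the two per-minute figures quoted in the table. The 10,000-minute volume is an arbitrary example, the Azure figure is the approximate list price as stated on this page, and real bills may include tiers or minimums that this flat calculation does not model.

```python
from decimal import Decimal

# Per-minute rates as quoted in the table above (USD).
PROSODY_PER_MIN = Decimal("0.004")
AZURE_PER_MIN = Decimal("0.022")  # approximate list price per the table


def monthly_cost(minutes, rate_per_min):
    """Flat per-minute estimate; ignores tiers, minimums, and taxes."""
    return (Decimal(minutes) * rate_per_min).quantize(Decimal("0.01"))


minutes = 10_000  # hypothetical monthly volume
print(monthly_cost(minutes, PROSODY_PER_MIN))  # 40.00
print(monthly_cost(minutes, AZURE_PER_MIN))    # 220.00
```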

- Reference-guided by design. Built for scripted read-aloud, assessment, coaching, and QA flows where your product already knows the expected utterance.

- Focused, not generic. We are not trying to be the entire speech stack. We own the alignment + pronunciation signals layer and keep the integration surface small.

- Private by default. Audio is processed inside Prosody infrastructure with no third-party speech API hop.

- SDK + HTTP. Start with curl, or move straight to `@prosody/sdk` for typed clients, validation, and browser audio utilities: `npm install @prosody/sdk`

What we're building next

The alignment and scoring layer is live today. On top of that foundation we are training our own pronunciation models in Rust, adding L1-aware coaching that adapts feedback to a speaker's native language, and building the event hooks (onMispronounce, onMonotone) that let products react to speech signals in real time. That intelligence layer is milestone M2, and it is in active development now.

The full roadmap runs through eight milestones: noise robustness, L1 detection, learner modeling, on-device inference, and more. See the overview for where things stand.

About Prosody

I started Prosody because every language app I looked at either used Azure's expensive SDK or built pronunciation scoring from scratch. Neither option made sense. There should be a clean, fast layer that any product can call — send audio, get back exactly what the speaker got right and wrong, and move on.

Prosody is built in Rust, runs entirely on our own infrastructure, and never sends audio to a third party. We publish our pricing, our accuracy numbers, and our latency contracts because we think infrastructure earns trust by being transparent, not by hiding behind sales calls.

New York · francois@prosody.studio